Table of Contents

Welcome to Evaluation and Value for Investment! Here's a thematic directory of posts

Hi, I’m Julian. I’m on a mission to disrupt value-for-money assessment. We can make it more credible and useful by combining insights from evaluation and economics. I’ve developed an approach that’s used globally to evaluate complex and hard-to-measure policies and programs, and I’m here to share it with you.

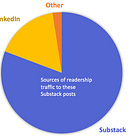

Subscribe to Evaluation and Value for Investment if you’re interested in using evidence and explicit values to make good decisions. I aim to write things that are useful, interesting, and fun.

It’s free to subscribe. Most articles are free for the first two months after posting. Paid subscribers have access to the full archive. There’s also a free 7-day trial option.

For reasons outside my control, the directory below is easier to read in your web browser than in the Substack app.

Here’s a thematic summary of the main topics I’ve covered to date:

1. The Value for Investment approach

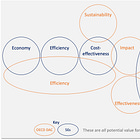

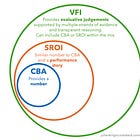

I developed the Value for Investment (VfI) approach through doctoral research, to bring greater clarity to answering evaluative questions about how well resources are used, whether enough value is created, and how more value may be created from the resources invested in policies and programs. VfI is my contribution to better value-for-money assessment.

Start here for a fun and easy introduction to VfI:

What is VfI? Quick overviews:

Practical VfI guidance documents:

Practical tips on designing a VfI evaluation:

Practical tips on conducting a VfI evaluation:

VfI principles and theoretical foundations - the what and the why:

Power dynamics and VfI:

The five Es - short reads on economy, efficiency, effectiveness, cost-effectiveness, and equity:

VfI case examples:

Other VfI topics:

2. Rubrics and evaluative reasoning

Rubrics and evaluative reasoning are the bedrock of the VfI system. A rubric is a matrix of criteria (aspects of value) and standards (levels of value) that evaluators use as a scaffold to make sense of evidence and render a warranted value judgement. In other words, a rubric is a way of making evaluative reasoning explicit.

Posts about rubrics and evaluative reasoning:

3. Program theory and value propositions

Value-for-money (VfM) assessments are too often fixated on the money and unclear about the value. We can address this by re-framing policies and programs as investments in value propositions. That’s why I prefer the term Value for Investment. Value propositions are useful constructs because we can define them and evaluate how well they’re met.

Posts about program theory and value propositions:

4. Economic methods of evaluation

VfI is an interdisciplinary approach, because “good resource use” is a shared domain of evaluation (determining the goodness of things) and economics (studying how people choose to use resources). The VfI approach encourages the inclusion of economic methods of evaluation within a wider mixed methods framework, where feasible and appropriate.

Posts about cost-benefit analysis:

Posts about Social Return on Investment and CBA:

Posts about other economic concepts and methods of evaluation:

5. Cubist Evaluation

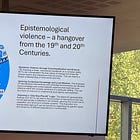

Cubist Evaluation seeks to challenge and disrupt conceptions of rigorous evaluation in complexity by encouraging us to think beyond methods, beyond measurement, and beyond objectivity. It involves mixing methods and reasoning strategies, honouring multiple perspectives, challenging dominant narratives, contributing new meaning, and opening up possibilities. It highlights a set of dispositions that we can choose to bring to evaluation and Value for Investment.

Posts about Cubist Evaluation:

6. Causality and contribution

Evaluation and causation are two different tasks, with different logics and methodologies. VfI contributes, in particular, to applying the valuing part of evaluation to value-for-money questions: how to define what good resource use and value creation mean in a context, how to use those definitions to identify evidence requirements and select methods, and how to make and communicate evaluative judgements.

Addressing whether an intervention caused or contributed to an observed set of outcomes is usually an important task within a VfM assessment. Before we can determine the value of an impact, we need to ascertain whether it is valid to claim impact and, if so, to what extent and in what ways. However, I have only written a few articles on causality because VfI doesn’t prescribe causal approaches. Evaluators and researchers have developed a diverse range of strategies and methods for addressing causality and contribution in different contexts, and they are all on the table as far as I’m concerned. What matters in a VfI evaluation is that the causal questions are addressed, and that the methods used to address them are selected according to context, possibly with more than one method used to support triangulation.

Here are my posts on this topic, which are by no means exhaustive. For more information on causal approaches, see the Better Evaluation website.

7. Other topics

AI tools in evaluation

Policy topics

Methodological topics

Other stuff

8. Free articles

It’s free to subscribe. Most articles are free for the first two months after posting. Paid subscribers have access to the full archive.

However, I have kept the following articles outside the paywall for all to enjoy.