Beyond the hierarchy

No single method of VfM assessment is ‘best’

Introduction

When it comes to evaluating value-for-money (VfM), methods are sometimes perceived to sit on a hierarchy with cost-benefit analysis (CBA) at the top, qualitative methods at the bottom, and a range of other options such as cost-effectiveness analysis (CEA) somewhere in between.

The assumption seems to be that valuing impacts in monetary units should be the ‘gold standard’ (because monetised benefits can be directly compared with costs), and if that’s not possible, we move down the ladder to other ways of quantifying value (such as quality-adjusted life years, also known as QALYs), or if we’re ‘desperate’, a framework like some or all of the 5Es (economy, efficiency, effectiveness, equity, cost-effectiveness), indicators, or rubric-based evaluation. In cases of ‘extreme desperation’ we might even turn to qualitative methods…😱

But this view is flawed.

No method is superior in all cases.[1] CBA has strengths and limitations, as do all methods. Many factors influence method selection beyond whether or not benefits can be monetised. For example, QALYs offer advantages over monetisation of benefits in some circumstances.[2]

Moreover, these various approaches aren’t direct substitutes for one another; they give us different information. For example, qualitative evidence can contribute essential insights that we won’t get from numbers, and vice versa.[3]

The 5Es aren’t even a method or approach - they’re just a framework of criteria that some organisations favour - so even if there were a hierarchy of methods, they wouldn’t belong in it.

Methods should be chosen contextually. There’s no natural hierarchy. Insidiously, the choice of method can affect the conclusions reached and consequently the decisions taken.[4] To mitigate this risk and ensure valid conclusions are reached, VfM assessments of complex programs are often strengthened by using multiple methods together rather than pitting them against one another.

So, a key question is: How do we determine a fit-for-purpose mix of methods for a given situation?

Mixing methods thoughtfully

Mixed methods can strengthen evaluation by helping us gain a more comprehensive understanding of a program’s value and unpack the story behind the numbers. For example, as Jennifer Greene argued, comparing different pieces of evidence can reveal where they agree and where they don't - a process called triangulation. We can get a broader and deeper view of a program’s value by drawing on the relative strengths of different sources. We can use the results from one method to inform the design of another.

But mixing methods creates a second challenge: How do we synthesise different types of evidence into a coherent VfM judgement?

The General Logic of Evaluation helps address both challenges: selecting the mix of methods and synthesising their findings

Rather than treating evaluation methods as competing alternatives, we can follow a structured decision-making process. The General Logic of Evaluation helps address the challenges of choosing methods and synthesising findings by guiding us through the steps of:

Identifying criteria - the aspects of VfM that matter (e.g., affordability? efficiency? equity? sustainability? cost-effectiveness? etc.)

Defining standards - levels of VfM; for example, what does ‘enough’ value look like for each of the criteria?

Determining what kinds of evidence are needed and will be credible to address the criteria and standards, and what mix of methods should be used to gather and analyse the evidence

Gathering and analysing the selected evidence, and synthesising findings through the prism of the criteria and standards to reach a clear conclusion.
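To make the four steps above a little more concrete, here is a minimal Python sketch of how criteria, standards and evidence sources might be written down and then lined up at the synthesis stage. Everything in it is an assumption for illustration: the level labels, the example criteria, the evidence sources and the ratings are all hypothetical, and the ratings themselves are human judgements made from the evidence, not something the code calculates. The sketch deliberately stops short of producing an overall verdict, because that judgement still requires deliberation.

```python
from dataclasses import dataclass
from typing import Dict, List

# Hypothetical performance levels, ordered from lowest to highest.
LEVELS = ["poor", "adequate", "good", "excellent"]


@dataclass
class Criterion:
    name: str            # step 1: an aspect of VfM that matters
    standard: str        # step 2: the level that counts as 'enough' value
    evidence: List[str]  # step 3: the mix of evidence chosen for this criterion


def meets_standard(rating: str, standard: str) -> bool:
    """True if the judged rating is at or above the agreed standard."""
    return LEVELS.index(rating) >= LEVELS.index(standard)


def synthesise(criteria: List[Criterion], ratings: Dict[str, str]) -> Dict[str, dict]:
    """Step 4: view the judged ratings through the criteria and standards.

    `ratings` holds judgements reached by people weighing the evidence;
    this function only organises them for transparent reporting.
    """
    findings = {}
    for c in criteria:
        rating = ratings[c.name]
        findings[c.name] = {
            "rating": rating,
            "standard": c.standard,
            "met": meets_standard(rating, c.standard),
            "evidence": c.evidence,
        }
    return findings


# Hypothetical example: two criteria, each drawing on more than one method.
criteria = [
    Criterion("efficiency", "good", ["cost analysis", "output monitoring data"]),
    Criterion("equity", "good", ["participant interviews", "reach data by group"]),
]
ratings = {"efficiency": "excellent", "equity": "adequate"}

findings = synthesise(criteria, ratings)
shortfalls = [name for name, f in findings.items() if not f["met"]]
print(shortfalls)
```

In this made-up case the equity criterion falls short of its standard, which is the kind of finding that a single headline number could easily hide.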

As I’ve said before, there’s more to evaluation than just this logic. For example, value judgements can also be supported by intuitive or deliberative reasoning - and I argue that these processes are not only compatible with criterial reasoning but mutually enhancing. I see meaningful stakeholder engagement and power-sharing in these processes as ethical imperatives as well as enhancing evaluation validity, credibility and use. These nuances don’t diminish the importance of the general logic, which I think is always present in evaluation, whether visible or not, and should be made explicit.

Why this approach matters

For boards and senior leaders, this approach means method choices are made visibly and defensibly, rather than defaulting to whatever is assumed to be ‘most rigorous’ on paper.

The general logic of evaluation is not a method or tool - it is a way of understanding how evaluative claims are structured. Its practical uses go further than that. In addition to underpinning evaluative judgements, the logic can form the basis of a systematic process for selecting methods, helping to ensure that evaluations and VfM assessments focus on what matters, not just what’s easiest to count, measure or monetise.

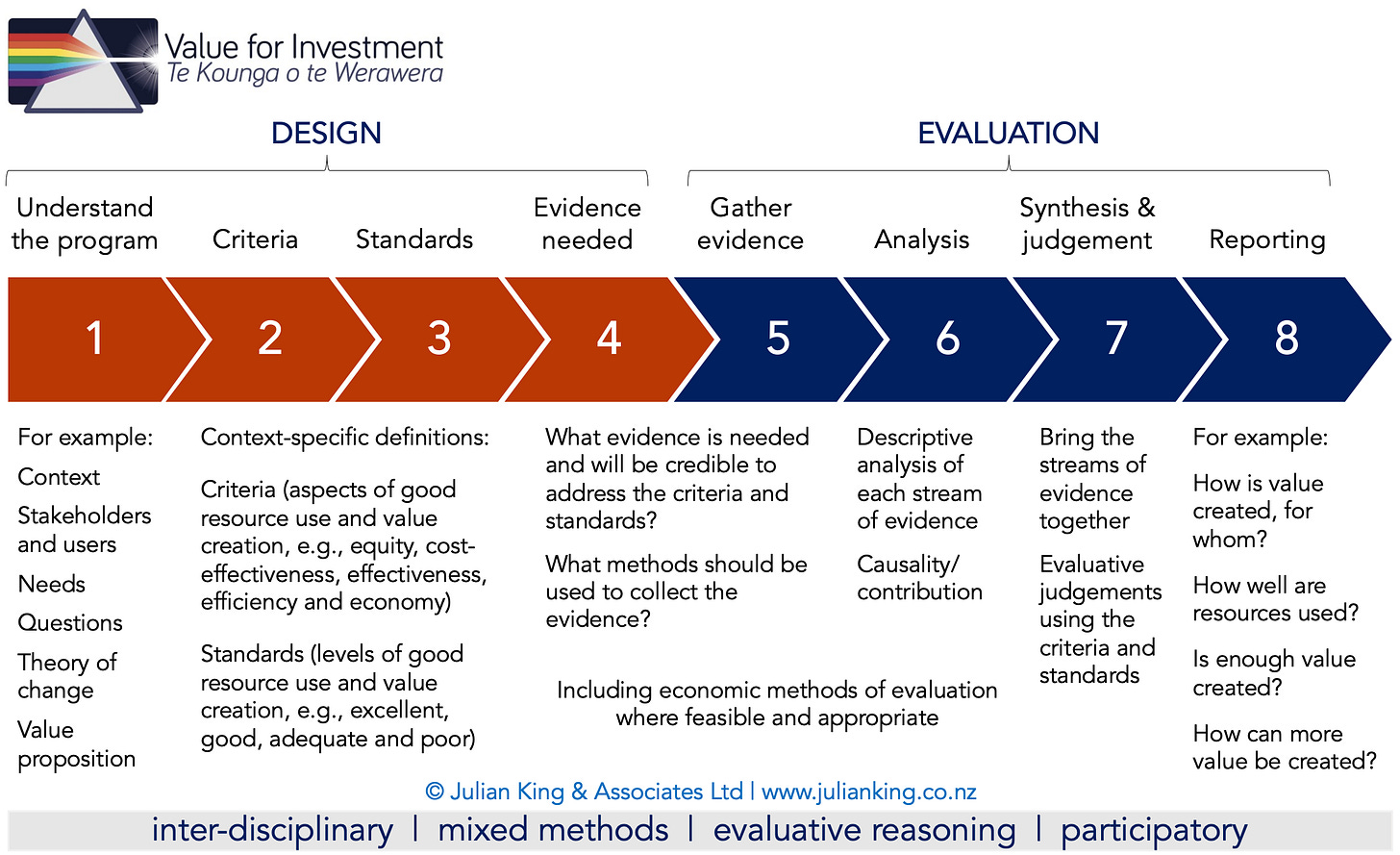

That is why the Value for Investment (VfI) system has its series of steps, as summarised in the diagram below - developing criteria and standards with stakeholders before determining evidence needs and selecting methods. It’s a process for implementing the general logic of evaluation, scaffolding not only evaluative judgements but also methods selection.[5]

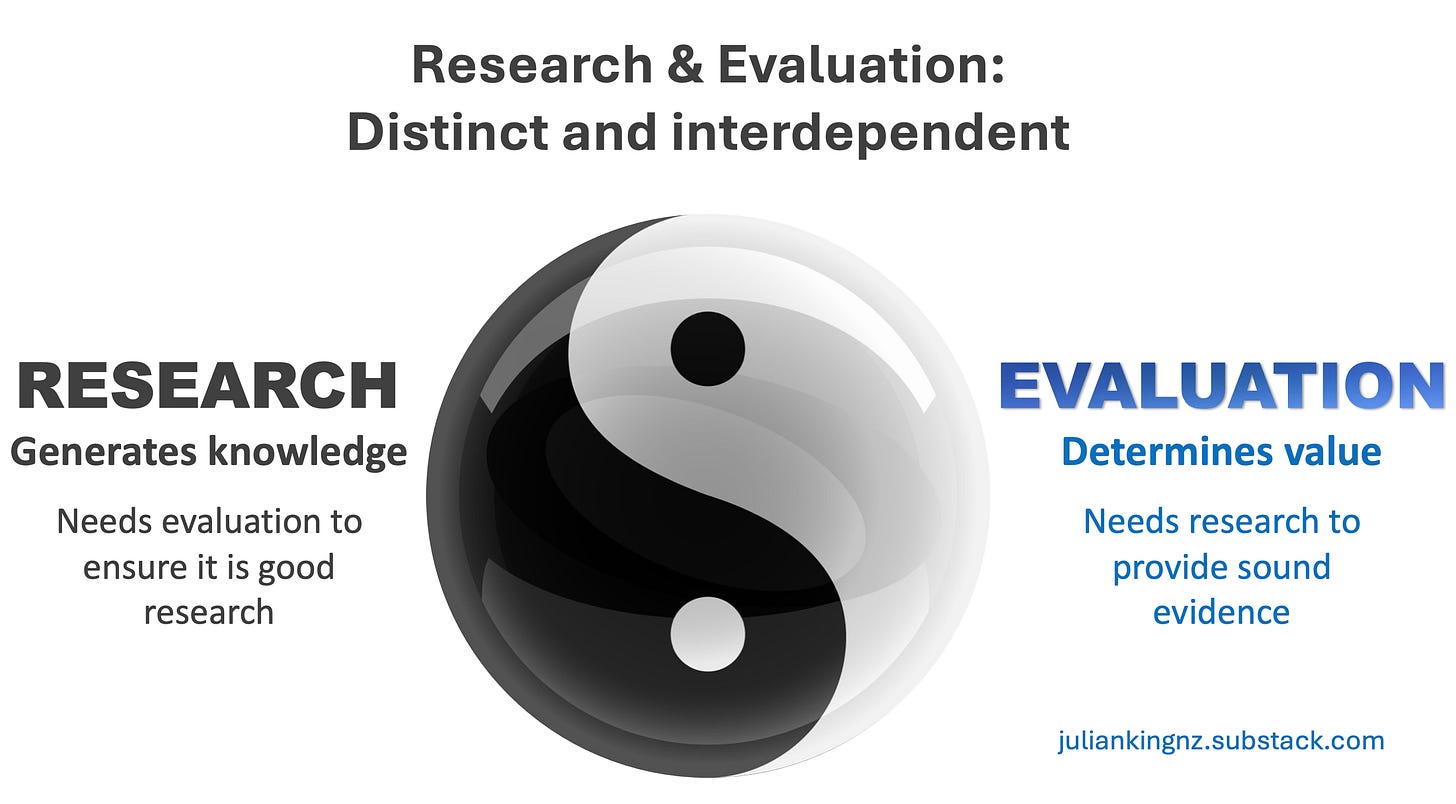

This process separates evaluative reasoning (how we make value judgements - steps 2, 3, and 7) from methods (how we gather and analyse evidence - steps 4, 5 and 6), highlighting an interconnected, interdependent relationship between research and evaluation. Reasoning doesn’t compete with methods; we need both.

A unifying framework, not just another method

VfI was developed to apply to any evaluation of resource use and value creation. The point isn’t to place my approach at the top of a hierarchy, but to move away from hierarchical thinking altogether. VfI isn’t a method and it’s not in competition with economic or other methods. It’s a system to guide the use of existing methods and tools, with a set of principles and a process to support contextually responsive evaluation. It provides structure and a sense-making framework for defining good VfM, selecting an appropriate mix of methods, making transparent judgements from the evidence, and presenting clear answers to VfM questions. Stakeholder engagement contributes at every step.

In practice, using the steps of the general logic of evaluation in this way often leads to decisions to combine multiple methods rather than relying on a single source of evidence. However, if this process does lead to a high-status method like CBA being selected and used as a stand-alone method, the general logic helps ensure that the choice is justified with a sound rationale.

Bottom line

Good VfM evaluation isn’t simply about the ‘best’ method - it’s about making valid judgements, based on explicit values and credible evidence, in collaboration with the right people. The General Logic of Evaluation underpins this endeavour. VfM assessments should use this logic to support evaluation design, methods selection and conclusions.

Also see…

A stepped approach to applying the general logic of evaluation can help you clarify information needs and consider an appropriate mix of methods, but you also need to understand the different methods including their strengths and limitations. Check out my Substack articles on these methods often used in VfM assessment…

Acknowledgment

Thanks to Andrew Partington for peer review of this post. Any errors or omissions are my responsibility alone.

I don’t address causal inference methods in this post but my view about that can be summed up in a similar way - no one method is superior in all cases:

The tensions between economic and evaluation-specific approaches to valuing have been likened to the “quant-qual” debate and the causal wars, in that both controversies involved opposing sets of worldviews in which one side maintained that a particular set of methods (quantitative data analysis and randomized controlled trials, respectively) represented a gold standard while the other argued that methods should be tailored to context. In both cases, the latter sides’ appeals to a higher order, overarching logic offered a basis for a set of principles framing the dominant methods as conditionally valid and sometimes appropriate contributors to evaluation, rather than being universally superior methods. Although these debates are not over, evaluators are at least armed with robust frameworks to design and defend context-appropriate methodologies. (Me, 2017).

In some contexts, QALYs may be preferable to monetisation of benefits - illustrating my point that there’s no natural hierarchy. QALYs combine quality and quantity of life in a single metric, without attempting to include other aspects of welfare. Advantages of QALYs include, among other things: a) estimating and representing population preferences for different health states and how people see these as affecting their capability to live their lives; and b) providing a standardised utility measure that enables comparisons across population groups, diseases, and interventions, thereby informing rational resource allocation choices across different types of spending options within a budget or funding pool. Although monetary valuations may appear standardised, there are so many different ways of converting impacts to monetary valuations that real-world comparisons between different spending options on the basis of CBA results can be more problematic.

Consider a CBA of a community development initiative. Monetised benefits represent an estimate of how much people may be willing to pay for the initiative and its impacts, but they don’t tell us about the experiences, emotions or motivations that sit behind those valuations, or why some people place greater value on the initiative than others, and they don’t offer suggestions for making the initiative more valuable. By incorporating a mix of quantitative and qualitative methods of data collection and analysis into the evaluation, we can benefit from their complementary insights.

For example, CBA and cost-utility analysis (CUA) are based on different models of utility - that is, they start out with different assumptions about how people value things. Buchanan and Wordsworth (2015) found that the choice of economic evaluation approach could impact on decisions about whether to adopt a new healthcare intervention in about one in five studies that applied both approaches. I think their finding supports my arguments that: a) economic methods of evaluation provide important information but an evaluative judgement still has to be made; the numbers aren’t the judgement, they’re pieces of evidence requiring human deliberation to reach evaluative conclusions; and b) mixed methods mitigate risks of making a judgement from a single piece of evidence.

When they bring out the hierarchies, we bring out the garden!

Incidentally, the general logic of evaluation has nothing to do with logic models. They are completely different things. I learned in a conversation today that this point may be worth clarifying.