Using economic methods evaluatively

A summary of my 2017 article in the American Journal of Evaluation

Early in my PhD adventure, I reviewed the evaluation and economics literature, developed a conceptual model for interdisciplinary evaluation to address value-for-money questions, and published it in a journal. The abstract and references are free to read, but the full article is paywalled:

King, J. (2017). Using Economic Methods Evaluatively. American Journal of Evaluation, 38(1), 101–113.

Journal articles aren’t always widely read, for various reasons including limited marketing and restricted access. As an author, I find this a bit frustrating: writing an article involves significant unpaid toil, after which only a select few people become aware of its existence, a subset of them have access to the journal, and of those, only a handful might actually read the article. Also, academic papers can be a bit of an acquired taste, formal and lengthy and zzzz… So, as a public service, here’s a summary, at about 15% of the word count of the original.

Using economic methods evaluatively: the short version

There’s increasing interest in evaluating value for money (VfM) of public policies and social investments, but no agreed definition of what VfM means and ongoing debate about how it should be evaluated.

My mission was to bring some clarity to these issues.

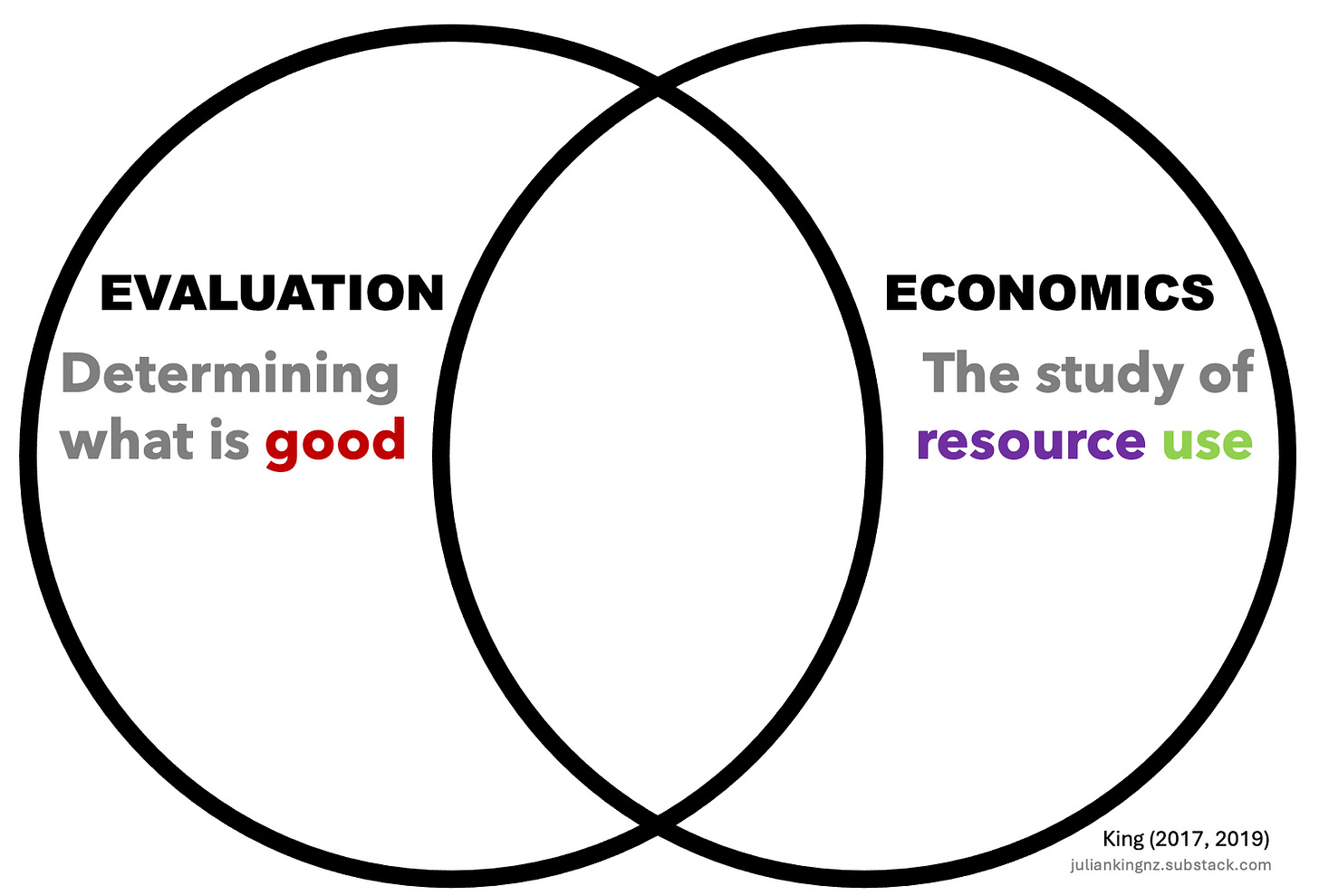

I introduced the term “value for investment” (VfI)¹, defined as “the merit, worth, and significance of resource use”. It is an interdisciplinary concept at the intersection of evaluation and economics, suggesting that both disciplines have a role to play in determining whether something is a good resource use.

While economic methods of evaluation are widely used in determining whether a resource use is good, all methods have strengths and limitations. Observing that economic evaluations are often siloed from other methods, I proposed a more integrated approach. I argued that economic evaluations should be combined with other evidence and values, within a broader evaluative reasoning framework.

To illustrate these concepts, I presented a scenario of a fictitious non-government organisation (NGO) providing alcohol and drug rehabilitation services. The NGO operated a social enterprise café that served coffee and snacks to the public, trained clients in customer service, and generated profit for reinvestment in rehabilitation services. What if we were to assess the café’s VfM using economic methods alone?

Economic methods of evaluation

I outlined various economic methods used in evaluating social programs, each of which I’ve covered in more detail in previous Substack articles:

Cost-effectiveness analysis (CEA) measures costs in monetary terms and consequences in natural or physical units, producing a cost-effectiveness ratio that compares costs to outcomes. For example, we could calculate the average cost per café trainee who successfully completes their training, which gives us a cost-per-outcome figure for that result. But how would we know whether that cost per trainee is good? Ideally, we would compare the costs and consequences of the café with those of an alternative investment.
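To make the arithmetic concrete, here is a minimal Python sketch of the two ratios the paragraph distinguishes: the average cost per trainee who completes training, and the incremental cost per additional completion compared with an alternative. All figures, and the comparator (“classroom-based training”), are hypothetical and invented purely for illustration.

```python
from dataclasses import dataclass


@dataclass
class Option:
    """A programme option: total cost (money) and outcomes in natural units."""
    name: str
    total_cost: float  # e.g. dollars over the evaluation period (hypothetical)
    outcomes: int      # e.g. trainees who complete training (hypothetical)

    def average_cost_per_outcome(self) -> float:
        return self.total_cost / self.outcomes


def incremental_cer(new: Option, comparator: Option) -> float:
    """Extra cost per extra outcome of `new` relative to `comparator`."""
    delta_cost = new.total_cost - comparator.total_cost
    delta_outcomes = new.outcomes - comparator.outcomes
    if delta_outcomes == 0:
        raise ValueError("Options have equal outcomes; ratio is undefined.")
    return delta_cost / delta_outcomes


# Hypothetical figures for illustration only.
cafe = Option("social enterprise café", total_cost=300_000, outcomes=40)
classroom = Option("classroom-based training", total_cost=200_000, outcomes=30)

print(cafe.average_cost_per_outcome())   # 7500.0
print(incremental_cer(cafe, classroom))  # 10000.0
```

The average ratio on its own says little; the incremental ratio answers the comparative question of what each extra completion costs relative to the alternative.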

Cost-utility analysis (CUA) is similar to CEA but measures consequences using utility-based measures such as quality-adjusted life years (QALYs), offering a more comprehensive way to summarise health-related benefits in a single measure. We could use this approach to see whether the café improves trainees’ quality of life at a reasonable cost per incremental gain.
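A minimal sketch of a cost-per-QALY calculation follows, assuming (hypothetically) that 40 trainees’ utility weight rises from 0.50 to 0.75 for two years and that the café costs 100,000 more than its comparator. For simplicity it ignores discounting, which a real CUA would normally apply; the function names are illustrative only.

```python
def qalys_gained(utility_before: float, utility_after: float,
                 years: float, people: int) -> float:
    """Undiscounted QALYs gained: change in utility weight x duration x people."""
    return (utility_after - utility_before) * years * people


def cost_per_qaly(incremental_cost: float, qalys: float) -> float:
    """Incremental cost-utility ratio: extra cost per extra QALY."""
    if qalys <= 0:
        raise ValueError("No QALY gain; ratio is not meaningful in this sketch.")
    return incremental_cost / qalys


# Hypothetical inputs for illustration only.
gain = qalys_gained(utility_before=0.50, utility_after=0.75, years=2, people=40)
print(gain)                          # 20.0
print(cost_per_qaly(100_000, gain))  # 5000.0
```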

Cost-benefit analysis (CBA) values both costs and consequences in monetary terms, making it highly versatile. We could use this approach to consider the financial performance (profits or losses) of the café as well as impacts on trainee incomes, and even some intangible benefits like life satisfaction if we can value them in monetary units.
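Because CBA puts costs and benefits in the same monetary units, it can summarise them as a net present value (NPV) and a benefit-cost ratio (BCR). The sketch below assumes hypothetical annual costs and monetised benefits for the café over three years and an assumed 5% discount rate; none of these numbers come from the article.

```python
def present_value(flows: list[float], rate: float) -> float:
    """Discount annual amounts (year 0 first) back to present value."""
    return sum(amount / (1 + rate) ** year for year, amount in enumerate(flows))


# Hypothetical figures for years 0, 1 and 2.
costs = [100_000, 50_000, 50_000]
benefits = [0, 120_000, 120_000]  # e.g. café profit plus trainee income gains
rate = 0.05                       # assumed discount rate

pv_costs = present_value(costs, rate)
pv_benefits = present_value(benefits, rate)
npv = pv_benefits - pv_costs  # positive: benefits exceed costs
bcr = pv_benefits / pv_costs  # above 1: benefits exceed costs

print(round(npv, 2), round(bcr, 2))  # 30158.73 1.16
```

Anything not monetised (for example, stigma reduction) simply drops out of these two numbers, which is one reason the article argues for combining CBA with other methods.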

In using the term “economic methods of evaluation”, I noted that these methods belong to the field of evaluation as much as to economics; for example, they can be understood as ways of implementing the General Logic of Evaluation (synthesising criteria, standards, and evidence to reach evaluative conclusions). However, I argued that economic methods of evaluation often need to be augmented with other methods to fully evaluate the merit, worth, and significance of resource use.

I acknowledged strengths of economic methods in identifying, quantifying, valuing, and comparing the costs and consequences of social investments. These strengths include modelling and sensitivity analysis to explore implications of uncertainty, and communicating value in ways that audiences often find compelling.

While CBA is sometimes said to be the “gold standard” method for evaluating VfM, because of its ability to incorporate costs and benefits in a single metric, I argued that there are no gold standards in evaluation and that CBA has strengths and limitations. For example, it assesses value through the lens of Kaldor-Hicks efficiency.² Although this contributes unique insights, it is only one criterion. What if additional criteria also matter, such as equity, relevance, sustainability, stewardship of resources, and productivity? We would need additional methods to help us consider them fully.

Given these and other limitations, now well covered in this Substack series, I concluded that CBA isn’t enough on its own. I proposed that an overarching framework, one that incorporates economic methods alongside other methods, is necessary to fully assess the merit, worth, and significance of resource investments in social policies and programs. This, in turn, requires a synthesis process to combine multiple values and sources of evidence and support evaluative judgement-making. What could such a process look like?

Evaluative reasoning

Evaluative reasoning is the process through which evaluators apply the logic of evaluation, judging value on the basis of evidence and argument with the help of explicit criteria (defined aspects of value) and standards (defined levels of value). I’ve started to unpack its theory and practice in previous posts on Substack.

In the article, I contrasted two of several possible approaches to synthesising criteria, standards and evidence, which I labelled “quantitative” and “qualitative” valuing and synthesis, focusing on their respective strengths and limitations.

Quantitative valuing and synthesis (also known as Multi-Criteria Decision Analysis or Numerical Weight and Sum) uses a system of numerical weights to synthesise individual scores given to each criterion. For example, for the social enterprise café, we could assign 50% of the total score to trainee outcomes, 30% to customer satisfaction and brand value, and 20% to financial outcomes. If trainee outcomes are rated 4 out of 5, customer satisfaction and brand value 2/5, and financial outcomes 3/5, then the total score would be (50% × 4/5) + (30% × 2/5) + (20% × 3/5) = 40% + 12% + 12% = 64%. But is 64% good? We would need some basis for judging this. The approach works best when we are explicitly comparing the social enterprise café with one or more alternatives, so that we can identify the option with the highest score. We would also need to be able to justify the numerical weights and scores, because arbitrary numbers can lead to misleading conclusions. These (and other) conditions often aren’t met, rendering quantitative valuing and synthesis infeasible in many cases.
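The weighted-sum arithmetic in the café example can be reproduced in a few lines. This is a minimal sketch of numerical weight and sum using the example’s weights and scores; the function name `weighted_sum` is hypothetical. Note that the code only aggregates numbers: it cannot tell us whether 64% is good, nor justify the weights.

```python
import math

# Weights and scores from the café example (scores are out of 5).
weights = {
    "trainee outcomes": 0.50,
    "customer satisfaction and brand value": 0.30,
    "financial outcomes": 0.20,
}
scores = {
    "trainee outcomes": 4,
    "customer satisfaction and brand value": 2,
    "financial outcomes": 3,
}
MAX_SCORE = 5


def weighted_sum(weights: dict[str, float], scores: dict[str, int],
                 max_score: int) -> float:
    """Numerical weight and sum: weighted average of normalised scores."""
    if not math.isclose(sum(weights.values()), 1.0):
        raise ValueError("Weights must sum to 1.")
    return sum(w * scores[criterion] / max_score
               for criterion, w in weights.items())


total = weighted_sum(weights, scores, MAX_SCORE)
print(f"{total:.0%}")  # 64%
```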

Qualitative valuing and synthesis uses ordinal scaling and can support judgement-making from both quantitative and qualitative evidence. For example, rubrics (introduced to the field of evaluation by E. Jane Davidson) could be used to evaluate the social enterprise café, defining what “excellent”, “good”, “adequate” and “poor” performance look like for each criterion: trainee outcomes, customer satisfaction, brand value, de-stigmatising addiction services, and financial results. I argued (after Scriven) that an ordinal approach with qualitative definitions for each criterion and standard is feasible in a wider range of circumstances and allows for a more nuanced evaluation.³

Implications for practice

I argued that this proposed evaluative model of VfI had several implications for evaluation practice. I proposed that evaluations of resource use should:

Integrate theory and practice from evaluation and economics in a multidisciplinary approach;

Apply explicit evaluative reasoning to make transparent judgements about the merit, worth, and significance of resource use;

Select methods according to context, for any mix of qualitative, quantitative, and economic methods of evaluation; and

Follow program evaluation standards to ensure, for example, that VfI evaluations are not only conceptually, methodologically and practically sound but also inclusive, responsive, contextually viable and meaningful for people whose lives are affected.

Putting these principles into action required practical guidance, which I subsequently developed and tested.

Conclusions

By using explicit evaluative reasoning to integrate insights from economic analysis with other evidence and values, evaluators can retain the strengths of CBA while addressing broader considerations like social justice and process issues. Combining multiple methods offers more comprehensive evaluations, particularly for complex social investments.

Building on this article and subsequent work, I advocate for the use of mixed methods, including economic methods where appropriate, within an overarching participatory evaluative reasoning process to evaluate whether social investments provide good value for the resources invested in them.

To find out more

The article summarised above was an important foundation for the development of the Value for Investment approach. The original article is paywalled, but you can also find it in my open-access PhD dissertation.

You can grab free resources through the VfI portal on my website and here on Substack.

¹ My thinking behind the term “value for investment” was to reframe our view of policies and programs, seeing them not so much as monetary costs as investments involving multiple different kinds of resources (monetary and non-monetary), and creating diverse kinds of value such as social, cultural, economic and environmental value. Money is a valid way of representing value, but it’s a choice, and its fitness-for-purpose depends on the context.

² Kaldor-Hicks efficiency is an elaboration on Pareto efficiency. An allocation of resources is said to be Pareto-efficient if there is no alternative allocation in which one person can be made better off without making somebody else worse off. The Pareto criterion is too restrictive to be practical for evaluating real-world policy proposals, which usually produce winners and losers. Kaldor (1939) modified the Pareto criterion by arguing that for an action to be in the public interest, those who gain from it should be able (in principle) to compensate those who lose from it, and still find the action worthwhile. However, the compensation does not actually have to take place. Hicks (1939) added that the losers must not be able to bribe the winners to forgo the action. For a lucid explanation of how Kaldor-Hicks efficiency came about, see this.
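The difference between the Pareto and Kaldor criteria can be shown with a small sketch. It assumes, as a simplification, that each person’s gain or loss is a fixed money-equivalent amount; under that assumption the Hicks condition (losers cannot profitably bribe winners to forgo the action) reduces to essentially the same comparison, so only the Kaldor test is coded. The stakeholders and amounts are hypothetical.

```python
def is_pareto_improvement(changes: dict[str, float]) -> bool:
    """True if nobody is worse off and at least one person is better off."""
    values = changes.values()
    return all(v >= 0 for v in values) and any(v > 0 for v in values)


def passes_kaldor_test(changes: dict[str, float]) -> bool:
    """True if winners' total gains exceed losers' total losses, so winners
    could in principle compensate losers and still be better off.
    Compensation is hypothetical: nobody actually has to pay it."""
    gains = sum(v for v in changes.values() if v > 0)
    losses = -sum(v for v in changes.values() if v < 0)
    return gains > losses


# Hypothetical money-equivalent gains (+) and losses (-) from opening the café.
changes = {"trainees": 50_000, "café customers": 30_000,
           "nearby café owner": -20_000}

print(is_pareto_improvement(changes))  # False: the nearby owner is worse off
print(passes_kaldor_test(changes))     # True: 80,000 in gains vs 20,000 lost
```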

³ Although I didn’t go into the social dynamics of evaluative reasoning in this article, the alternative approaches to synthesis discussed here do not make evaluative judgements; people are responsible for doing that, as featured in last week’s post. Which people to involve, and how to involve them, are crucial decisions in evaluation design. Approaches to valuing and synthesis should be selected for their fitness-for-purpose to support human deliberation and sense-making.
